A late present under the tree: Why Nvidia’s potential China chip push matters more than holiday cheer

Imagine waking up after the holidays to learn a company you already loved just found a way to add billions to next year’s revenue outlook — and the market’s mood changes overnight. That’s the vibe around Nvidia right now, after multiple reports in late December 2025 that it has sounded out Taiwan Semiconductor Manufacturing Co. (TSMC) to ramp up production of its H200 AI chips to meet surging Chinese demand.

This isn’t just another supply-chain footnote. It’s a story that ties together geopolitics, export policy, product lifecycle management, and the very real question investors keep asking: can Nvidia keep turning AI momentum into sustainable profits?

Why this news grabbed headlines

- Reuters reported on December 31, 2025 that Nvidia has asked TSMC about boosting H200 output. Chinese technology firms have reportedly ordered more than 2 million H200 chips for 2026, while Nvidia’s on-hand inventory sits near 700,000 units, leaving a gap of roughly 1.3 million units or more. (reuters.com)

- The H200 is a high-performance Hopper-architecture GPU built on TSMC’s 4nm process and is positioned well above the H20 variants previously permitted for China. These potential sales could recover some of the revenue Nvidia lost to export restrictions and help offset the inventory write-downs it took earlier in the year. (reuters.com)

- The reports are sourced to anonymous insiders and Reuters’ coverage makes clear regulatory and approval steps — particularly in China and via U.S. licensing — remain unresolved. That means upside exists, but risks and execution hurdles are material. (reuters.com)

Quick snapshot of the backdrop

- 2025 saw Nvidia enjoy strong AI-driven gains early in the year (the stock rose substantially year-to-date), but the second half cooled as investors worried about growth sustainability, supply constraints, and geopolitically driven trade frictions. (aol.com)

- U.S. export policy earlier in 2025 had constrained Nvidia’s ability to ship its most powerful chips into China; the company developed China-specific variants (like H20) to address that market. Later policy shifts introduced limited pathways for H200 shipments under license and with fees, reopening a big demand pool. (investing.com)

- Chinese hyperscalers and internet firms, reportedly including buyers on the scale of ByteDance, are aggressively expanding AI infrastructure spending. That makes China a lucrative addressable market if regulatory approvals and supply can be aligned. (reuters.com)

What this could mean for Nvidia (and investors)

- Near-term revenue relief: Filling even part of an order book of more than 2 million units at H200 price points would be a multi-billion-dollar revenue boost. It could help offset the inventory write-downs Nvidia took earlier in the year and improve near-term cash flow. (reuters.com)

- Supply balancing act: Ramping H200 production while launching/expanding Blackwell and Rubin series chips globally requires careful capacity planning. Prioritizing one market could tighten supply elsewhere and affect pricing and customer relationships. (investing.com)

- Regulatory and political risk: Even with U.S. approvals loosening in specific ways, shipments to China still require licenses and potentially conditions (tariffs, bundling with domestic chips, or limits). Beijing’s own approval pathways could further complicate delivery. Execution risk is high. (reuters.com)

- Valuation sensitivity: Markets have already priced a lot of AI optimism into Nvidia. Concrete evidence that China demand translates into recognized sales and margin recovery would justify further re-rating; conversely, delays or regulatory blocks could trigger renewed volatility. (finance.yahoo.com)
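The arithmetic behind the "multi-billion-dollar" framing can be made explicit. The sketch below is a rough back-of-the-envelope model, not a forecast: the unit counts are the reported figures (about 2 million units ordered, about 700,000 in inventory), while the function names, the `fill_rate` parameter, and the $2 billion target are assumptions introduced here for illustration. No H200 unit price is assumed; instead the code solves for the average price at which a given revenue target would be reached.

```python
# Reported figures (Reuters, December 31, 2025); both are approximate.
REPORTED_ORDERS = 2_000_000    # "more than 2 million" H200 units ordered for 2026
REPORTED_INVENTORY = 700_000   # on-hand inventory, "near 700,000" units


def production_gap(orders: int, inventory: int) -> int:
    """Units that would need fresh production if inventory shipped first."""
    return max(orders - inventory, 0)


def revenue_usd(units: int, avg_price_usd: float, fill_rate: float = 1.0) -> float:
    """Revenue if `fill_rate` (0..1) of `units` ships at `avg_price_usd`.

    `avg_price_usd` is a caller-supplied assumption; the source gives no price.
    """
    if not 0.0 <= fill_rate <= 1.0:
        raise ValueError("fill_rate must be between 0 and 1")
    return units * fill_rate * avg_price_usd


def min_price_for_revenue(target_usd: float, units: int, fill_rate: float = 1.0) -> float:
    """Average unit price needed for shipped units to reach `target_usd`."""
    shipped = units * fill_rate
    if shipped <= 0:
        raise ValueError("no units shipped")
    return target_usd / shipped


if __name__ == "__main__":
    gap = production_gap(REPORTED_ORDERS, REPORTED_INVENTORY)
    print(f"Units needing new production: {gap:,}")

    # Hypothetical target: $2 billion, with only half the order book filled.
    needed = min_price_for_revenue(2e9, REPORTED_ORDERS, fill_rate=0.5)
    print(f"Average price needed for $2B at 50% fill: ${needed:,.0f}")
```

Under these inputs the production gap is 1,300,000 units, and a half-filled order book clears $2 billion at any average price above $2,000 per unit. Framing the question as a threshold keeps the sketch independent of any specific price estimate.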

A few practical scenarios to watch in early 2026

- Official confirmations: Nvidia or TSMC comments confirming new H200 production orders or schedules would materially reduce uncertainty.

- Regulatory signals: U.S. Commerce Department license approvals and any Chinese import approvals (or conditions) will be immediate market catalysts.

- Delivery timing: Reports that initial shipments will arrive before the Lunar New Year (mid-February 2026) would accelerate revenue recognition expectations — but failure to meet such timing would raise execution questions. (investing.com)

Points investors should keep top of mind

- This story is a high-upside, high-uncertainty event: the potential gains are real, but so are regulatory and supply risks.

- Nvidia’s strategic play is logical: retain developer mindshare in China and prevent customers from migrating to domestic alternatives while also protecting global product roadmaps.

- Market reaction will depend on the clarity of confirmations — rumors lift sentiment, but confirmed orders and deliveries move the needle on fundamentals.

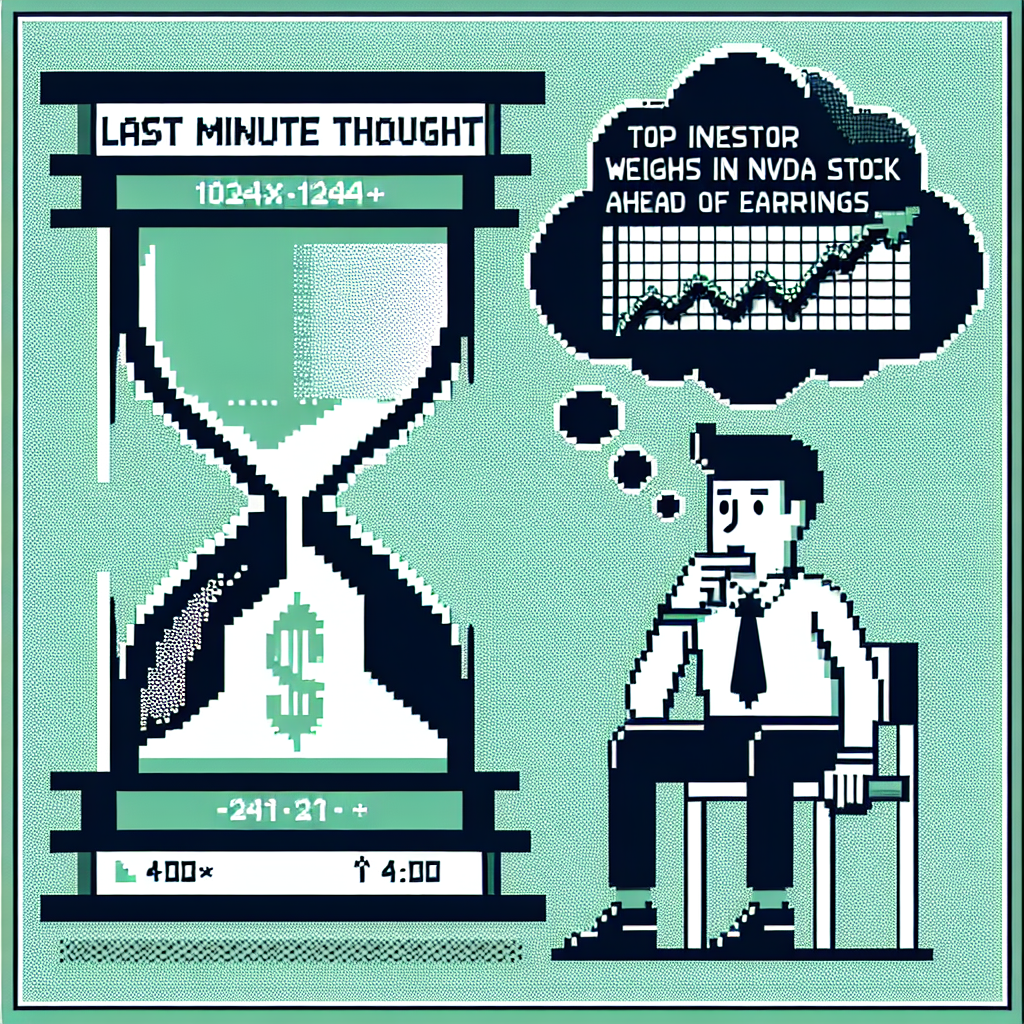

Final thoughts

Nvidia sounding out TSMC to boost H200 output is the kind of development that can flip a narrative: from “AI hype run” to “execution that converts enormous demand into actual revenue.” Still, investors should treat late-December reports as the start of a story, not the ending. The coming weeks — regulatory approvals, official company statements, and any first shipment confirmations — will be the proof points that determine whether this “late Christmas gift” truly arrives or remains an exciting, but unrealized, possibility.

If you’re following Nvidia for its AI leadership and revenue upside, watch the supply-and-regulatory milestones closely. They’ll tell you whether this is a material new chapter in the company’s growth or another tantalizing but tentative headline.

Sources

Nvidia sounds out TSMC on new H200 chip order as China demand jumps, Reuters, December 31, 2025.

https://www.reuters.com/world/china/nvidia-sounds-out-tsmc-new-h200-chip-order-china-demand-jumps-sources-say-2025-12-31/ (reuters.com)

Nvidia Is About to Deliver a Huge Late Christmas Gift to Investors, AOL / 24/7 Wall St., December 2025 (republished Yahoo/AOL coverage of Reuters reporting).

https://www.aol.com/finance/nvidia-deliver-huge-christmas-gift-094437235.html (aol.com)

Nvidia considers increasing H200 output after U.S. export policy updates, Reuters, December 2025 (earlier reporting and context).

https://www.reuters.com/technology/exclusive-nvidia-considers-increasing-h200-chip-output-due-robust-china-demand-sources-say-2025-12-14/ (investing.com)

ByteDance potential Nvidia spend in 2026, Reuters, December 31, 2025 (context on big Chinese buyers).

https://www.reuters.com/world/asia-pacific/bytedance-spend-about-14-billion-nvidia-chips-2026-scmp-reports-2025-12-31/ (reuters.com)